How are PRs considered "qualified" in AI Impact metrics?

Last updated: February 4, 2026

Span's AI Impact metrics show how AI is shaping your engineering organization by cohorting code based on AI “dosage” — the percentage of code likely written by AI versus humans. It’s powered by a proprietary ML model that classifies AI-generated and human-written code with 95% accuracy, providing reliable insights into adoption, productivity, and code quality.

Why We Qualify PRs in the AI Impact Report

The AI Impact report focuses on "qualified" PRs — those containing code in languages our AI detection system can analyze. This approach provides you with the most accurate and actionable insights about AI coding assistant usage in your organization.

Qualified PRs Explained

Not all PRs are included in calculations. There are two qualification levels:

Level 1: AI Code Ratio Qualified

The PR must have at least one code chunk in a supported language. We group each PR's added and modified lines into contiguous chunks and only analyze chunks of at least 700 characters.

Currently supported languages include:

Python

TypeScript

JavaScript

Ruby

Java

C#

Go

Kotlin
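Here is a minimal Python sketch of the Level 1 rule. It is not Span's implementation. It assumes that a chunk is a run of consecutive changed line numbers within one file, that a file's language is inferred from its extension, and that chunk size is the total number of characters in the line contents. The `ChangedLine` type, the extension map, and the function names are all hypothetical.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath

# Hypothetical extension map; Span's real language detection may differ.
SUPPORTED_EXTENSIONS = {
    ".py": "Python", ".ts": "TypeScript", ".tsx": "TypeScript",
    ".js": "JavaScript", ".jsx": "JavaScript", ".rb": "Ruby",
    ".java": "Java", ".cs": "C#", ".go": "Go", ".kt": "Kotlin",
}
MIN_CHUNK_CHARS = 700  # threshold stated in this article


@dataclass
class ChangedLine:
    """One added or modified line (hypothetical data model)."""
    path: str
    lineno: int
    text: str


def chunks(lines):
    """Yield runs of consecutive line numbers within the same file."""
    current = []
    for ln in sorted(lines, key=lambda x: (x.path, x.lineno)):
        if current and (ln.path != current[-1].path
                        or ln.lineno != current[-1].lineno + 1):
            yield current
            current = []
        current.append(ln)
    if current:
        yield current


def language_of(path):
    """Return the supported language for a path, or None if unsupported."""
    return SUPPORTED_EXTENSIONS.get(PurePosixPath(path).suffix.lower())


def level1_qualified(lines):
    """True if any chunk of >= MIN_CHUNK_CHARS is in a supported language."""
    for chunk in chunks(lines):
        size = sum(len(ln.text) for ln in chunk)  # assumed size measure
        if size >= MIN_CHUNK_CHARS and language_of(chunk[0].path):
            return True
    return False


# One 750-character Python chunk qualifies. The same content split into two
# 350-character chunks does not, and neither does a Markdown file.
block = [ChangedLine("app/models.py", n, "x" * 50) for n in range(1, 16)]
split = [ChangedLine("app/models.py", n, "x" * 50)
         for n in list(range(1, 8)) + list(range(9, 16))]
docs = [ChangedLine("README.md", 1, "y" * 800)]
print(level1_qualified(block), level1_qualified(split), level1_qualified(docs))
# True False False
```

The split case shows why the chunk threshold matters: the same amount of code can fall below 700 characters per chunk when a gap in line numbers breaks it up.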

Level 2: AI Dosage Analysis Qualified

At least one chunk in a supported language AND

At least 30% of the PR's non-ignored lines are in supported languages

Why? PRs with too little supported code produce unreliable AI detection results. The 30% threshold ensures data quality.
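A minimal sketch of the Level 2 check, assuming you already know whether the PR passed Level 1 and how many of its non-ignored changed lines are in supported languages. The function and parameter names are hypothetical. It uses `Fraction` so that a PR sitting exactly at 30% is not misclassified by floating-point rounding.

```python
from fractions import Fraction

MIN_SUPPORTED_SHARE = Fraction(3, 10)  # the 30% threshold, kept exact


def level2_qualified(has_supported_chunk, supported_lines, non_ignored_lines):
    """Level 2: a supported-language chunk AND >= 30% supported lines.

    supported_lines:   non-ignored changed lines in supported languages
    non_ignored_lines: all changed lines that remain after ignore rules
    """
    if not has_supported_chunk or non_ignored_lines == 0:
        return False
    return Fraction(supported_lines, non_ignored_lines) >= MIN_SUPPORTED_SHARE


print(level2_qualified(True, 90, 300))    # exactly 30%: True
print(level2_qualified(True, 60, 300))    # 20%: False
print(level2_qualified(False, 300, 300))  # no qualifying chunk: False
```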

Tip: Turn on "Show Unknown PRs" to see what's being filtered out.

The Challenge

Not all code can be analyzed for AI detection. Span's AI detection technology supports major programming languages, but some files and formats fall outside our analysis capabilities:

Binary files, images, and compiled assets

Certain configuration formats or proprietary languages

Non-code changes (pure documentation, Markdown files, etc.)

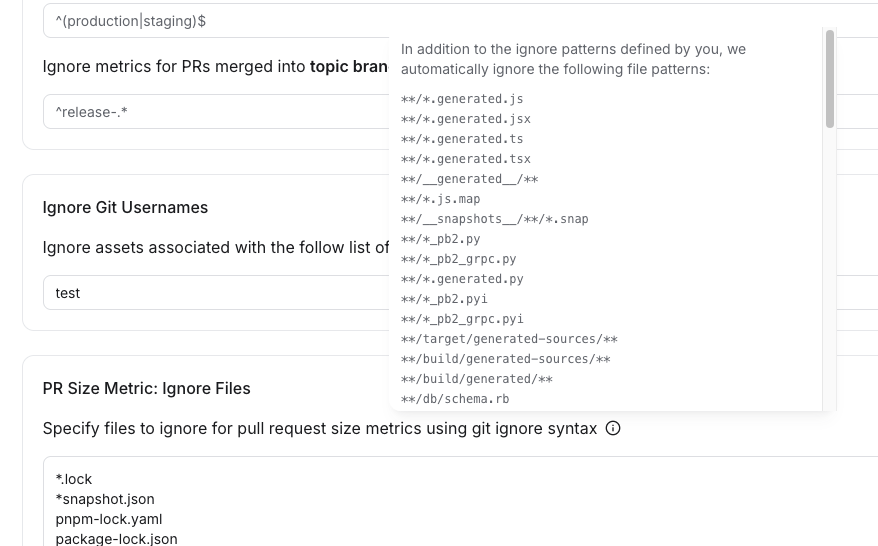

Not all code should be analyzed, either. Files that are typically generated rather than written by hand, such as dependency files and snapshots, are excluded from all PR metrics and ignored for AI analysis (see the screenshot for the full list). You can configure ignore settings in Settings > Metrics.
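To illustrate how ignore rules might filter files before any lines are counted, here is a minimal sketch using glob patterns from Python's standard `fnmatch` module. The patterns are hypothetical examples of dependency files and snapshots, not Span's actual list, and matching against the file name alone is an assumption.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Hypothetical patterns for illustration only; the real list is managed
# in Settings > Metrics and may differ.
IGNORE_PATTERNS = ("package-lock.json", "yarn.lock", "Gemfile.lock", "go.sum", "*.snap")


def is_ignored(path, patterns=IGNORE_PATTERNS):
    """Match each glob pattern against the file name, not the full path."""
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pattern) for pattern in patterns)


def non_ignored(paths, patterns=IGNORE_PATTERNS):
    """Keep only the files that should count toward PR and AI metrics."""
    return [p for p in paths if not is_ignored(p, patterns)]


changed = ["web/package-lock.json", "src/app.ts", "tests/__snapshots__/App.test.tsx.snap"]
print(non_ignored(changed))  # ['src/app.ts']
```

`fnmatchcase` is used instead of `fnmatch` so that matching is case-sensitive on every operating system.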

Why Qualification Matters

Qualified PRs give you an accurate baseline. If we included PRs with no analyzable code in our calculations, they would artificially dilute your AI adoption metrics. For example:

A team merges 100 PRs

80 contain analyzable code (Python, TypeScript, etc.)

20 contain only unanalyzable content (images, binaries, unsupported formats)

AI tools generated 40% of the analyzable code

Without qualification, this would show as 32% AI adoption (40% × 80/100, assuming the PRs are of similar size). That figure is misleading: among the code where AI tools could actually be used, AI adoption was 40%. The 20 PRs with unanalyzable content couldn't benefit from AI coding assistants anyway.
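The diluted figure is the qualified-view percentage scaled by the share of added lines that are analyzable. In this worked equation, $L_{\text{analyzable}}$ and $L_{\text{all}}$ are notation introduced here for lines added in analyzable code and lines added across all merged PRs. The step to 80/100 holds only if the 80 analyzable PRs and the 20 unanalyzable PRs add similar numbers of lines:

$$
\text{AI\%}_{\text{all}}
  = \frac{\text{AI lines}}{L_{\text{all}}}
  = \frac{\text{AI\%}_{\text{qualified}} \times L_{\text{analyzable}}}{L_{\text{all}}}
  = 40\% \times \frac{80}{100} = 32\%
$$

If the unanalyzable PRs were much larger or smaller than the others, the diluted figure would move further from or closer to 40% accordingly.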

Two Views for Complete Context

We provide both perspectives so you can choose the right lens:

1. Qualified PRs View (Recommended)

Shows AI adoption among code where AI tools can actually help

Best for: Understanding true AI coding assistant utilization and ROI

This is the default view

2. All PRs View

Shows a conservative estimate across all engineering work

Best for: Understanding AI's impact on your entire development pipeline, including work that can't be AI-assisted

To include unqualified PRs, click "display" and then toggle on "Show unknown PRs".

A PR is considered "qualified" when it meets these criteria:

Status: Merged - The PR must be merged (or reverted), not open or closed without merging

Contains analyzable code - The PR must include code in programming languages that Span supports for AI detection analysis. This is the key qualification criterion - the PR must have "classified lines" that Span can analyze.

Authored by a developer - The PR author must be classified as a development contributor

The main distinction of a "qualified" PR is that it contains code in supported languages that Span's AI detection system can analyze. PRs containing only unsupported languages, or no code at all (like documentation-only changes in formats Span doesn't analyze), can be merged and still not be "qualified."
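The three criteria can be sketched as a single predicate. This is a minimal illustration, not Span's code: the `PullRequest` record, its field names, and the status strings are hypothetical, and "classified lines" is reduced to a simple count.

```python
from dataclasses import dataclass

QUALIFYING_STATUSES = {"merged", "reverted"}  # the article counts reverted PRs too


@dataclass
class PullRequest:
    """Hypothetical PR record; field names are illustrative."""
    status: str                # e.g. "open", "closed", "merged", "reverted"
    classified_lines: int      # lines Span's AI detection could analyze
    author_is_developer: bool  # author classified as a development contributor


def is_qualified(pr):
    """Merged (or reverted), has classified lines, and authored by a developer."""
    return (
        pr.status in QUALIFYING_STATUSES
        and pr.classified_lines > 0
        and pr.author_is_developer
    )


feature = PullRequest("merged", 240, True)
docs_only = PullRequest("merged", 0, True)  # merged, but nothing to classify
draft = PullRequest("open", 240, True)      # has code, but not merged
print(is_qualified(feature), is_qualified(docs_only), is_qualified(draft))
# True False False
```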

How Qualification Appears in the Report

In the AI Impact report UI, you'll see something like:

"n = X qualifying PRs out of Y total PRs in period"

Where:

X = merged PRs with analyzable code (qualified)

Y = all merged PRs in the period (including those without analyzable code)

The two calculation modes in the report use these different baselines:

Mode 1 (Qualified): AI% = AI lines / lines in analyzable code only

Mode 2 (All PRs): AI% = AI lines / all lines added across all merged PRs

This allows you to see either a focused view (among code we can analyze) or a conservative estimate (among all merged work).
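A minimal sketch of how X, Y, and the two modes relate, using the example team of 100 merged PRs. It assumes every record is already a merged PR and treats a PR as qualified when it has any analyzable lines, skipping the author and 30% checks. The `MergedPR` type and `ai_impact` function are hypothetical, not part of Span's product or API.

```python
from dataclasses import dataclass


@dataclass
class MergedPR:
    """Hypothetical per-PR totals for one reporting period."""
    added_lines: int       # every line added in the PR
    analyzable_lines: int  # added lines in supported, non-ignored code
    ai_lines: int          # analyzable lines classified as AI-written


def ai_impact(prs):
    """Return (X, Y, Mode 1 AI%, Mode 2 AI%) for a list of merged PRs."""
    qualified = [p for p in prs if p.analyzable_lines > 0]  # simplified rule
    ai = sum(p.ai_lines for p in qualified)
    analyzable = sum(p.analyzable_lines for p in qualified)
    added = sum(p.added_lines for p in prs)
    mode1 = ai / analyzable if analyzable else None  # qualified baseline
    mode2 = ai / added if added else None            # all-PRs baseline
    return len(qualified), len(prs), mode1, mode2


# The example team: 80 PRs of analyzable code (40% AI) and 20 PRs with none.
prs = ([MergedPR(100, 100, 40) for _ in range(80)]
       + [MergedPR(100, 0, 0) for _ in range(20)])
x, y, mode1, mode2 = ai_impact(prs)
print(f"n = {x} qualifying PRs out of {y} total PRs in period")
print(f"Mode 1: {mode1:.0%}, Mode 2: {mode2:.0%}")
# n = 80 qualifying PRs out of 100 total PRs in period
# Mode 1: 40%, Mode 2: 32%
```

Both modes share the same numerator; only the denominator changes, which is why Mode 2 can never exceed Mode 1.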

The Bottom Line

Qualification ensures you're measuring AI adoption fairly and accurately — focusing on the work where AI coding assistants can actually contribute. This gives you reliable insights for investment decisions, team comparisons, and understanding real AI impact on your development velocity.